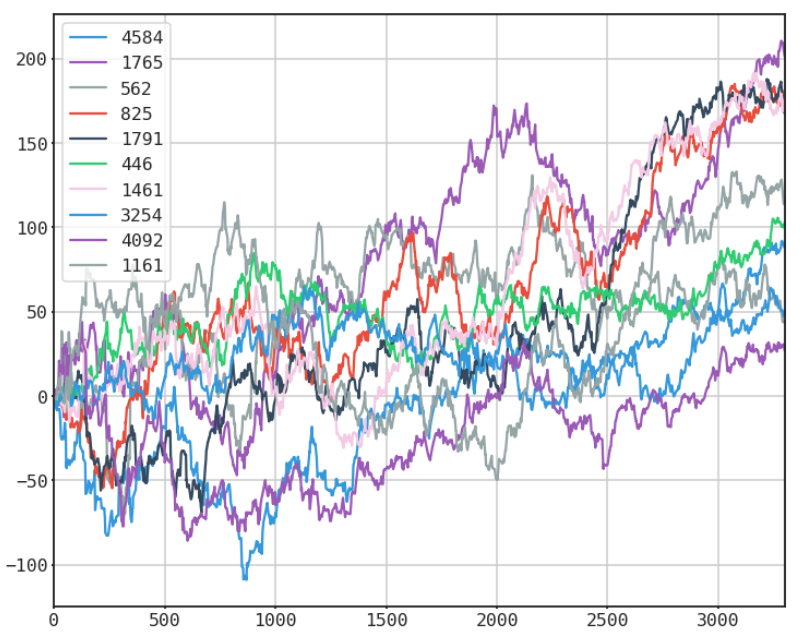

To this day, the most popular article I have ever written on this blog is "How to get Free Intraday Stock Data with Netfonds". Unfortunately, the Netfonds API has really declined in usability: too many popular stocks are missing, and trade and price quotes are irregular. Simply put, as the API went down, so did the code.

However, all hope is not lost. The wonderful people at BarChart.com have created a well-documented, easily accessible API for intraday stock data and even near real-time quotes. The only caveat is that you must request access to get a personal API key. Again, this is FREE, and the process is simple and straightforward. I think I received my API key the same day, within 24 hours at most.

Step 1: Go to http://www.barchartondemand.com/api.php and request an API key.

Step 2: Use or modify my code to get FREE intraday stock data.
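Once you have a key, it is worth keeping it out of the script itself. Below is a minimal sketch of one way to do that, assuming you have stored the key in an environment variable. The variable name `BARCHART_API_KEY` and the helper `load_api_key` are my own hypothetical choices for illustration and are not part of the BarChart API.

```python
import os


def load_api_key(env_var="BARCHART_API_KEY"):
    """Return the API key stored in an environment variable.

    The default variable name is a hypothetical convention, not something
    BarChart requires; use whatever name you prefer.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(
            f"Set the {env_var} environment variable to your API key first."
        )
    return key
```

With this in place, something like `apikey = load_api_key()` can stand in for a hardcoded key string, so the script can be shared without exposing your personal key.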

One thing to note: in this example I use the S&P 500 components as my list of stock symbols. I covered how to get fresh SPY holdings data directly from the provider in a previous post titled "GET FREE FINANCIAL DATA W/ PYTHON (STATE STREET ETF HOLDINGS - SPY)". Now on to the code...

First I import the necessary modules.